My AI “Dev Team” Built a Python Backend in 7 Days. I Wrote Zero Code.

Claude Code became my lead developer, architect, and debugger — all I did was explain what I needed in plain English.

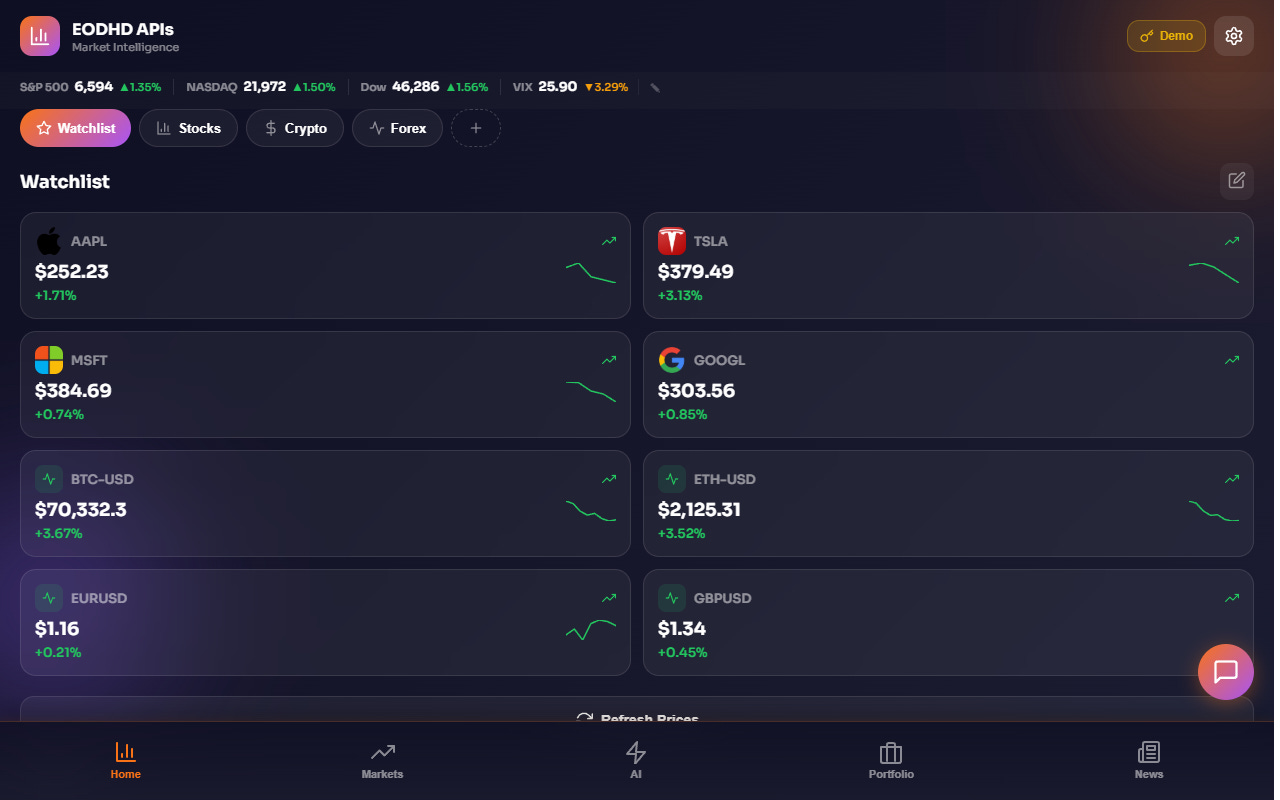

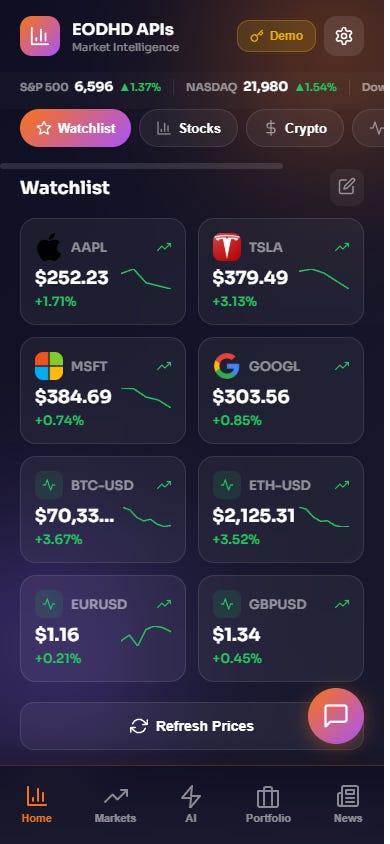

The finished product: real-time stocks, crypto, forex data flowing through an AI-built backend. All powered by EODHD APIs.

In brief: A COO with zero Python knowledge got AI (Claude Code) to build a complete async Python backend in 7 days. The backend streams real-time data from EODHD APIs — stocks, crypto, forex, fundamentals, news — handles user auth, payments, and 40+ API endpoints. It runs on a $24/month server and powers the free Telegram Mini App. Here’s every prompt, every crash, and every lesson.

Hi, I’m Nick — COO at EODHD APIs. In Article 1, I told you how I decided to build a fintech app alone using AI, despite having zero coding skills. The first prototype appeared in 20 minutes. But a prototype is not a product.

To build a real product, I needed a backend. A server. A database. APIs. Authentication. All the things that “real developers” spend months building.

I didn’t know what any of those words really meant.

This is the story of how AI built it all in 7 days — and the spectacular failures along the way.

Day 1: The Blank Terminal

I remember the exact moment. I opened Claude Code — Anthropic’s AI coding assistant that runs in the terminal — and stared at the blinking cursor. I had one question in my head: “How do I even start?”

Here’s what I actually typed:

Me: I need a backend for a Telegram Mini App that shows financial market data. It should use EODHD APIs to fetch real-time stock prices, crypto, forex. Users need to be able to create a watchlist and see live prices. The backend should be in Python because that’s what our server runs.

I didn’t know what “async” meant. I didn’t know what “aiohttp” was. I didn’t know the difference between GET and POST requests.

Claude Code didn’t care. It asked me a few clarifying questions — “Do you want WebSocket support for real-time updates?”, “What database would you prefer?” — and I answered in the only language I know: business requirements.

Me: I just want prices to update when users open the app. For the database, whatever is easiest. We have PostgreSQL on the server.

Claude Code: I’ll use aiohttp for an async web server, PostgreSQL with asyncpg for the database, and HTTP polling for price updates. Let me set up the project structure...

Within 15 minutes, it had created:

bot/main.py — the entry point that starts both the Telegram bot and the web server

bot/api.py — the web server handling HTTP requests

bot/database.py — all PostgreSQL database operations

bot/config.py — configuration and environment variables

requirements.txt — Python package dependencies

I didn’t understand a single line of the code. But I understood the file names. And when Claude Code said “try running it,” I copied the command it gave me, pasted it into the terminal, and…

ModuleNotFoundError: No module named 'aiohttp'

My first crash. It took AI exactly 4 seconds to explain that I needed to install the dependencies first, with one command: pip install -r requirements.txt. Five minutes later, the server was running.

Day 2: Connecting to EODHD APIs

The skeleton was up, but it was empty. No data. I needed to connect it to the treasure trove I was sitting on: EODHD APIs data.

Here’s where working at EODHD APIs became my unfair advantage. I knew what data existed, even if I couldn’t code the integration myself. So my prompts were specific about what I wanted, vague about how to get it:

Me: Connect to EODHD APIs real-time endpoint. The URL format is https://eodhd.com/api/real-time/{SYMBOL}?api_token=KEY. For example, AAPL.US gives Apple stock price. I need it to return: price, change, change_percent, volume, open, high, low. Also need crypto like BTC-USD.CC and forex like EURUSD.FOREX.

Claude Code built an API proxy layer. It wrote functions that:

Accept a ticker symbol from the frontend

Call the EODHD APIs real-time endpoint

Parse and normalize the response

Cache it briefly to avoid hitting rate limits

Return clean JSON to the frontend

Then it did the same for fundamentals. And historical data. And news.
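
The five steps above can be sketched in a few lines. This is a minimal illustration, not the code Claude Code actually wrote: the class name `QuoteProxy`, the 15-second default TTL, and the raw field names (`close`, `change_p`, and so on) are assumptions, and the upstream call is injected as a plain function so the sketch runs without a network connection or an API key.

```python
import time
from typing import Callable, Dict, Tuple


def normalize_quote(symbol: str, raw: dict) -> dict:
    """Map a raw quote payload to the fields the prompt asked for.

    The raw keys used here are assumptions about the upstream JSON.
    """
    return {
        "symbol": symbol,
        "price": raw.get("close"),
        "change": raw.get("change"),
        "change_percent": raw.get("change_p"),
        "volume": raw.get("volume"),
        "open": raw.get("open"),
        "high": raw.get("high"),
        "low": raw.get("low"),
    }


class QuoteProxy:
    """Fetch, normalize, and briefly cache quotes, one cache entry per symbol."""

    def __init__(
        self,
        fetch: Callable[[str], dict],          # hypothetical upstream call
        ttl_seconds: float = 15.0,             # assumed cache window
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache: Dict[str, Tuple[float, dict]] = {}

    def get(self, symbol: str) -> dict:
        now = self._clock()
        hit = self._cache.get(symbol)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]                      # still fresh: skip the upstream call
        quote = normalize_quote(symbol, self._fetch(symbol))
        self._cache[symbol] = (now, quote)
        return quote
```

Passing the fetch function and the clock in as arguments keeps the caching logic testable on its own; in a real async backend the same idea would wrap an awaited HTTP call instead.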

EODHD APIs Data Connected on Day 2

Real-time prices — stocks (150,000+ tickers across 70+ exchanges), crypto (3,000+), forex (150+ pairs)

Fundamentals — Balance Sheet, Income Statement, Cash Flow for every public company

Historical OHLCV — daily, weekly, monthly price data going back decades

News — real-time financial news with sentiment scores

All from a single EODHD APIs key. One key, all data types.

By the end of Day 2, I could open the app in Telegram, see my watchlist, and every ticker was pulling live prices from EODHD APIs. Stocks in green, crypto ticking up, forex rates updating. It was mesmerizing.

Real-time watchlist: stocks (AAPL, TSLA, MSFT, GOOGL), crypto (BTC, ETH), forex (EUR/USD, GBP/USD) — all streaming from EODHD APIs through the AI-built backend.

Day 3–4: The Spectacular Crashes

This is the part nobody talks about in the “AI builds everything!” hype articles. Things break. Constantly.

Here’s my actual crash log from those two days:

Crash #1: CORS — The Invisible Wall

The backend worked perfectly when I tested it from the server. But when I opened it in Telegram’s browser, nothing loaded. Blank screen. No data.

I had no idea what “CORS” meant. I described the problem to Claude Code:

Me: The app works when I test on the server but shows no data in Telegram. The browser console shows some error about “Access-Control-Allow-Origin”. What is this?

AI explained it was a browser security feature and wrote nginx proxy rules that solved it. Total time: 8 minutes.
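
For readers who want to see what that browser rule amounts to, here is a small sketch. It is not the nginx fix described above: it expresses the same idea in Python, as a function that decides which CORS headers a response should carry. The function name and the example origin are hypothetical.

```python
from typing import Dict, Optional, Set


def cors_headers(origin: Optional[str], allowed_origins: Set[str]) -> Dict[str, str]:
    """Return the CORS response headers for a request coming from `origin`.

    An empty dict means no permission is granted, so the browser will
    refuse to let the page read the response.
    """
    if origin is None or origin not in allowed_origins:
        return {}
    return {
        "Access-Control-Allow-Origin": origin,
        "Access-Control-Allow-Methods": "GET, POST, OPTIONS",
        "Access-Control-Allow-Headers": "Content-Type, Authorization",
        # Tell caches that the response differs per requesting origin.
        "Vary": "Origin",
    }


# Hypothetical origin of the page that hosts the Mini App frontend.
ALLOWED = {"https://app.example.com"}
print(cors_headers("https://app.example.com", ALLOWED))
print(cors_headers("https://evil.example.net", ALLOWED))
```

Testing from the server itself never triggers this check, which is why the backend looked fine there and failed only inside Telegram's browser.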

Crash #2: The Demo Key Problem

I wanted the app to work for anyone, even without an EODHD APIs key. So I set up a “demo mode” with a shared API key. Sounds simple. Except:

Error: API rate limit exceeded (429)

Every demo user was hitting EODHD APIs directly, burning through the shared key’s quota in minutes.

AI rebuilt the architecture: demo users go through a backend proxy with aggressive caching (5-minute window for real-time, 1 hour for fundamentals). API key users bypass the proxy and hit EODHD APIs directly with their own quota. This single decision saved the entire demo experience.
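
The routing decision can be sketched as a single function. The two cache windows come from the paragraph above; everything else (the names `Route` and `plan_request`, and the default window for other data types) is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Cache windows from the article: 5 minutes for real-time, 1 hour for fundamentals.
CACHE_TTL_SECONDS = {"real-time": 300, "fundamentals": 3600}
DEFAULT_TTL_SECONDS = 300  # assumption for data types the article does not mention


@dataclass(frozen=True)
class Route:
    via_proxy: bool         # True: go through the backend with the shared key
    cache_ttl_seconds: int  # 0 means nothing is cached on the backend


def plan_request(user_api_key: Optional[str], category: str) -> Route:
    """Decide how a request reaches the data provider."""
    if user_api_key:
        # Users with their own key call the provider directly on their own quota.
        return Route(via_proxy=False, cache_ttl_seconds=0)
    # Demo users share one key, so every response is cached aggressively.
    ttl = CACHE_TTL_SECONDS.get(category, DEFAULT_TTL_SECONDS)
    return Route(via_proxy=True, cache_ttl_seconds=ttl)


print(plan_request(None, "real-time"))
print(plan_request("my-own-key", "fundamentals"))
```

With this split, the shared key is called at most once per symbol per cache window, no matter how many demo users open the app.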

Crash #3: The Deployment That Broke Everything

My build process uses sed to replace a placeholder API key with the real one. One afternoon, the entire app stopped working. No prices, no news, nothing.

Turns out, sed replaces every occurrence of the placeholder string — including inside conditional checks. A function that was supposed to check “is this the demo key?” was now checking for the real key, making every user appear to be a demo user.

It took the AI and me 3 hours to figure this out. The fix was one line. I learned that day that in software, the hardest bugs are the ones that fail silently.
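
The bug is easy to reproduce in miniature. The article does not show the real file or the real placeholder, so the template, the placeholder `__API_KEY__`, and the key below are all made up; `str.replace` stands in for a global sed substitution.

```python
import re

PLACEHOLDER = "__API_KEY__"   # hypothetical placeholder string
REAL_KEY = "real-key-123"     # hypothetical production key

# A tiny stand-in for the source file that the build step rewrites.
TEMPLATE = '''\
API_KEY = "__API_KEY__"

def is_demo_user():
    return API_KEY == "__API_KEY__"
'''


def build_global(template: str, key: str) -> str:
    """Replace every occurrence, like a global sed substitution."""
    return template.replace(PLACEHOLDER, key)


def build_anchored(template: str, key: str) -> str:
    """Replace only the assignment line and leave the comparison untouched."""
    pattern = r'^API_KEY = "' + re.escape(PLACEHOLDER) + r'"$'
    return re.sub(pattern, lambda m: f'API_KEY = "{key}"', template, flags=re.MULTILINE)


def run(code: str) -> bool:
    """Execute the built source and report what is_demo_user() returns."""
    namespace: dict = {}
    exec(code, namespace)
    return namespace["is_demo_user"]()


print(run(build_global(TEMPLATE, REAL_KEY)))    # the check now compares the key with itself
print(run(build_anchored(TEMPLATE, REAL_KEY)))  # the check still compares against the placeholder
```

In the first build the comparison string was rewritten along with the assignment, so the check is always true and nothing crashes. That is what made the failure silent.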

Day 5–6: The Backend Grows a Brain

The basic data pipeline was solid. But I wanted more. I kept asking for features the way a product manager would:

Me: Users should be able to register with their email. Save their watchlist to the database so it persists. Add a settings page where they can connect their own EODHD APIs key for premium data access.

Me: I need an admin panel. I want to see how many users registered, who’s active, what tickers are popular. Make it at /admin/ with basic authentication.

Me: Add an API proxy that lets demo users access any EODHD APIs endpoint through our backend, with caching. The format should be /api/eodhd/proxy/{path}.

Each request took Claude Code between 5 and 30 minutes. No discussion about story points. No sprint planning. No standup meetings. Just: describe what you need, get working code, test it, move on.
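
As an illustration of the last request, here is a minimal sketch of how a /api/eodhd/proxy/{path} route might translate an incoming request into an upstream URL and a cache key. The function names are hypothetical, and the real handler may differ; the sketch only shows the two ideas that matter: the server attaches its own key, and the cache key never contains a token.

```python
from urllib.parse import urlencode

UPSTREAM_BASE = "https://eodhd.com/api"  # base of the URL quoted earlier in the article
PROXY_PREFIX = "/api/eodhd/proxy/"       # route format from the prompt above


def upstream_url(request_path: str, params: dict, server_key: str) -> str:
    """Translate a proxy request into the upstream URL, using the server's key."""
    if not request_path.startswith(PROXY_PREFIX):
        raise ValueError("not a proxy path")
    path = request_path[len(PROXY_PREFIX):]
    if not path or ".." in path.split("/"):
        raise ValueError("invalid upstream path")
    # Ignore any token sent by the client; demo traffic always uses the shared key.
    query = {k: v for k, v in params.items() if k != "api_token"}
    query["api_token"] = server_key
    return f"{UPSTREAM_BASE}/{path}?{urlencode(sorted(query.items()))}"


def cache_key(request_path: str, params: dict) -> str:
    """Build a cache key that is identical for all demo users asking the same thing."""
    query = sorted((k, v) for k, v in params.items() if k != "api_token")
    return f"{request_path}?{urlencode(query)}"


print(upstream_url("/api/eodhd/proxy/real-time/AAPL.US", {"fmt": "json"}, "SERVER_KEY"))
print(cache_key("/api/eodhd/proxy/real-time/AAPL.US", {"fmt": "json"}))
```

Sorting the query parameters means two requests that differ only in parameter order share one cache entry.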

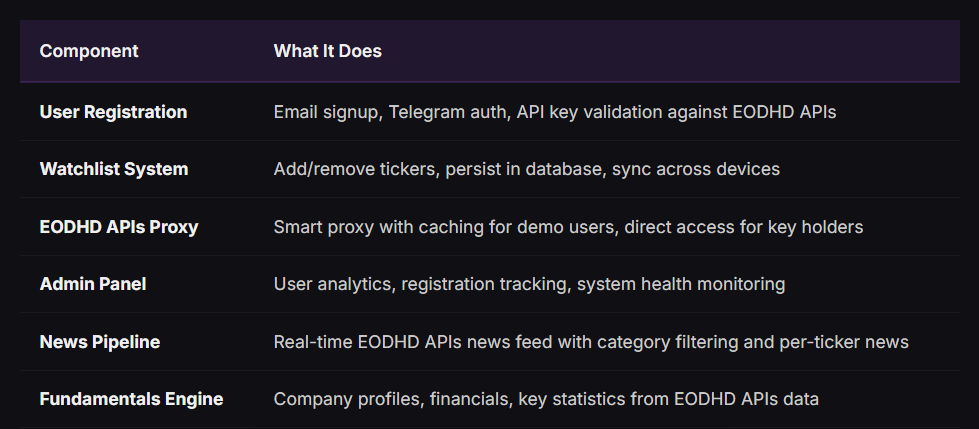

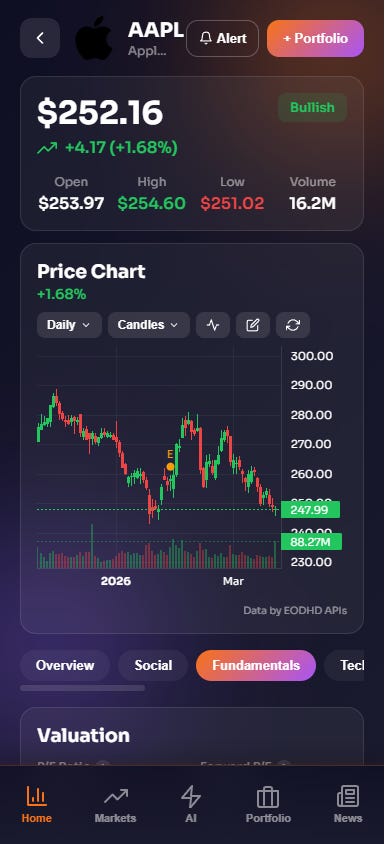

By Day 6, the backend had:

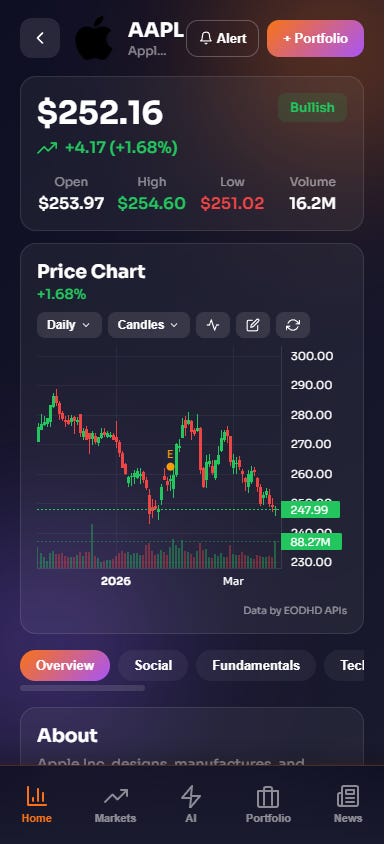

Stock detail view for Apple (AAPL): candlestick chart, real-time price, OHLCV data, sentiment indicator — all fetched through the AI-built backend from EODHD APIs.

Day 7: The Architecture That Survived

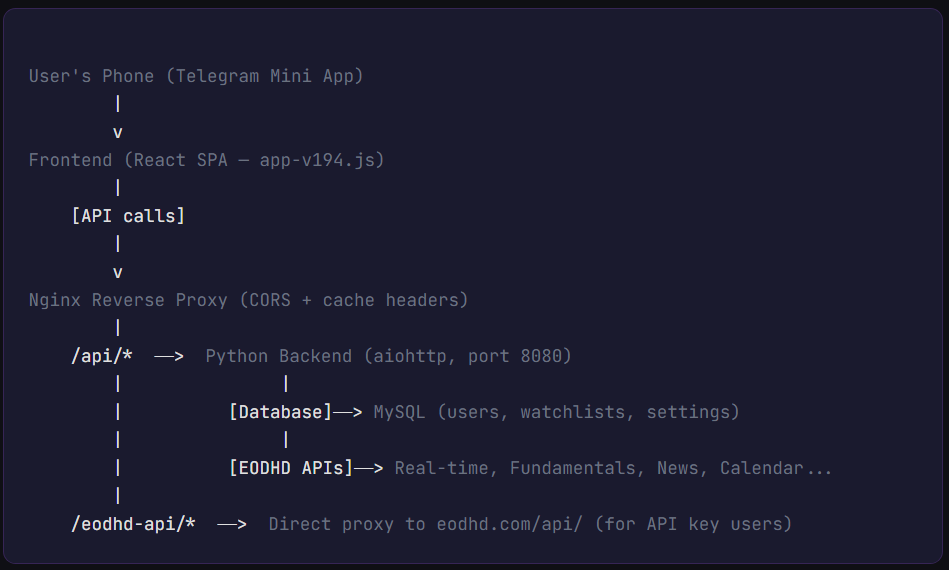

On Day 7, I asked Claude Code to explain what it had built. I wanted to understand the architecture, at least at a high level. Here’s what it drew for me (simplified):

The entire backend is a single Python process running on a $24/month DigitalOcean droplet (4GB RAM, 2 vCPUs). It handles all API routing, data caching, user management, and serves as the bridge between the frontend and EODHD APIs.

Is this how a team of senior engineers would build it? Probably not. They’d use microservices, Kubernetes, Redis, message queues. They’d spend 3 months on architecture alone.

But my architecture works. It’s been running for a month with zero downtime. And it cost me $0 in developer salaries.

The Prompt That Changed Everything

Around Day 5, I discovered something that 10x’d my productivity. Instead of asking for one feature at a time, I started writing what I call “business requirement prompts” — multi-paragraph descriptions of what I wanted, written the way I’d write a product spec for a dev team:

Me: We need a subscription and token system. Here’s the business logic:

- Free users get 5 AI requests per day, limited watchlist, demo data for non-popular tickers

- Users can buy tokens with Telegram Stars (in-app currency) or Stripe (credit card)

- Token packages: Starter (75 stars), Basic (150), Pro (375), Power User (1150)

- Each AI feature costs different tokens: Investment Thesis costs 2 tokens, Quick Analysis costs 1

- Track token balance, purchase history, usage per feature

- Admin should see revenue, conversion rates, popular packages

Build the complete backend: database tables, API endpoints, Stripe webhook handler, Telegram Stars payment handler, token deduction on each AI call, and admin dashboard integration.

Claude Code took that single prompt and built the entire payment system. Database schema. API endpoints. Webhook handlers. Token balance tracking. Admin revenue dashboard. It took about 45 minutes.

A payment engineer would charge $20,000+ for this work. And it would take weeks, not minutes.
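
To make the token rules from that prompt concrete, here is a small in-memory sketch of the deduction step. The feature costs and the daily free limit come from the prompt; the names `Account` and `charge` are hypothetical, and the rule that free daily requests are used up before any tokens are spent is an assumption, since the prompt does not say how the two interact. The real system stores balances in database tables.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict

FEATURE_COST = {"investment_thesis": 2, "quick_analysis": 1}  # from the prompt
FREE_REQUESTS_PER_DAY = 5                                     # from the prompt


class InsufficientTokens(Exception):
    """Raised when neither a free request nor enough tokens is available."""


@dataclass
class Account:
    tokens: int = 0
    free_used: Dict[date, int] = field(default_factory=dict)  # free requests per day
    usage: Dict[str, int] = field(default_factory=dict)       # successful calls per feature


def charge(account: Account, feature: str, today: date) -> str:
    """Pay for one AI call and return how it was paid: 'free' or 'tokens'."""
    cost = FEATURE_COST[feature]
    used_today = account.free_used.get(today, 0)
    if used_today < FREE_REQUESTS_PER_DAY:
        # Assumption: the daily free allowance is consumed first.
        account.free_used[today] = used_today + 1
        paid_with = "free"
    elif account.tokens >= cost:
        account.tokens -= cost
        paid_with = "tokens"
    else:
        raise InsufficientTokens(f"{feature} costs {cost}, balance is {account.tokens}")
    # Usage is recorded only for calls that were actually paid for.
    account.usage[feature] = account.usage.get(feature, 0) + 1
    return paid_with
```

In a database-backed version, the balance check and the deduction would need to happen in one transaction so that two simultaneous requests cannot spend the same tokens.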

What I Learned About Talking to AI

After 7 days of non-stop building, I’d discovered a formula. The quality of AI-generated code is directly proportional to the quality of your business description. Here are my rules:

Nick’s Rules for AI-Driven Development

Describe the “what” and “why,” never the “how.” I never said “use a HashMap.” I said “users need to find their watchlist instantly.”

Include edge cases in business terms. Not “handle null pointer exceptions” but “what happens if a user has no watchlist yet?”

Give real examples. “AAPL.US returns Apple stock. BTC-USD.CC returns Bitcoin. EURUSD.FOREX returns euro-dollar rate.”

Batch related features. Instead of 10 small prompts, write one comprehensive spec. AI produces more cohesive code when it sees the full picture.

Let AI pick the technology. I never once chose a library. Claude Code chose aiohttp over Flask, asyncpg over psycopg2, and it was right every time.

The Numbers After 7 Days

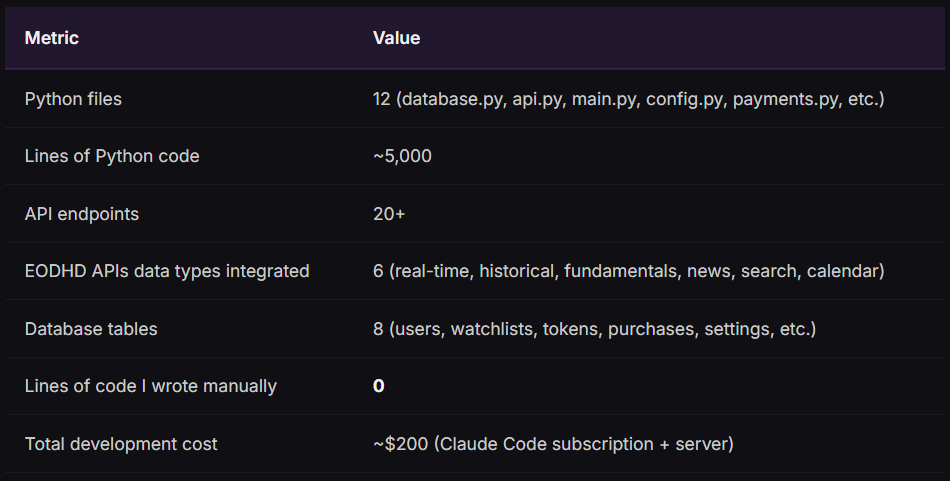

Let me be specific about what the AI-built backend could do after one week:

One month later, those numbers grew dramatically. The backend now has 40+ endpoints, 2,500+ lines of database code, handles AI chat relay to multiple providers, runs a backtesting engine, manages cross-platform data sync, and processes payments through both Stripe and Telegram Stars.

But the foundation — the architecture, the EODHD APIs integration, the user system — was all built in those first 7 days.

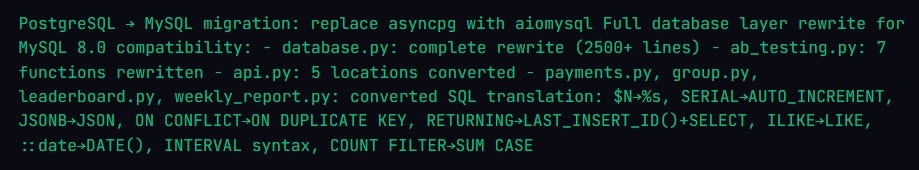

The Database Migration: AI’s Most Impressive Feat

Three weeks in, I needed to migrate from PostgreSQL to MySQL. Different SQL dialects, different connection libraries, different everything. In traditional development, database migrations are projects that take weeks and break things.

I typed one prompt:

Me: Migrate the entire database layer from PostgreSQL (asyncpg) to MySQL (aiomysql). Change all SQL syntax: $1 to %s, SERIAL to AUTO_INCREMENT, JSONB to JSON, ON CONFLICT to ON DUPLICATE KEY, RETURNING to LAST_INSERT_ID. Update all files.

Claude Code rewrote 2,500+ lines of database code across 8 files in a single session. Every query converted. Every parameter style changed. Every PostgreSQL-specific function replaced with MySQL equivalents. It even wrote a migration script to move user data from the old database to the new one.

The commit message tells the story:

Total time: about 2 hours. A database migration specialist would charge $15,000+ for this work.
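
Three of the substitutions named in that prompt are mechanical enough to sketch with regular expressions. This is an illustration only, not the migration Claude Code performed: the function names are hypothetical, and the ON CONFLICT and RETURNING rewrites are left out because they change the structure of a query and cannot be done safely by pattern matching.

```python
import re


def placeholders_in_order(sql: str) -> bool:
    """True if $1, $2, ... appear exactly once each, in ascending order.

    %s placeholders are purely positional, so a query that reuses $1 or
    references $2 before $1 needs its argument list rewritten by hand.
    """
    numbers = [int(n) for n in re.findall(r"\$(\d+)", sql)]
    return numbers == list(range(1, len(numbers) + 1))


def pg_to_mysql(sql: str) -> str:
    """Apply the simple, mechanical dialect substitutions."""
    if not placeholders_in_order(sql):
        raise ValueError("placeholders are reused or out of order; convert by hand")
    sql = re.sub(r"\$\d+", "%s", sql)                                            # $1 -> %s
    sql = re.sub(r"\bSERIAL\b", "INT AUTO_INCREMENT", sql, flags=re.IGNORECASE)  # SERIAL column type
    sql = re.sub(r"\bJSONB\b", "JSON", sql, flags=re.IGNORECASE)                 # JSONB -> JSON
    return sql


print(pg_to_mysql("SELECT * FROM users WHERE id = $1 AND email = $2"))
print(pg_to_mysql("CREATE TABLE t (id SERIAL PRIMARY KEY, data JSONB)"))
```

The sketch also rewrites matching text inside string literals, which is one reason a real migration still needs every converted query to be reviewed and tested.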

Why EODHD APIs Made This Possible

I want to be honest: a huge part of why this worked is EODHD APIs itself. When you’re building a fintech data app, 80% of the complexity is getting the data. With EODHD APIs, I didn’t have to:

Integrate with 5 different data providers for stocks, crypto, forex

Handle different authentication schemes for each provider

Normalize data formats from incompatible sources

Maintain separate rate limit logic per provider

Deal with different pricing tiers across vendors

One API key. One consistent format. 150,000+ tickers. Stocks, ETFs, crypto, forex, fundamentals, technicals, news, calendar, insider trading, options, sentiment — everything from one source.

This meant AI could focus on building the application logic instead of wrestling with data plumbing. Every EODHD APIs endpoint follows the same pattern: /api/{endpoint}/{symbol}?api_token=KEY. Once AI learned that pattern for real-time prices, it could replicate it for fundamentals, news, calendar, and everything else in minutes.
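
The pattern can be captured in one helper. This is a sketch under assumptions: the function name `build_url` is hypothetical, the base URL is taken from the real-time example quoted earlier, and the optional `fmt` parameter in the usage line is shown only as an example of an extra query parameter.

```python
from urllib.parse import quote, urlencode

BASE_URL = "https://eodhd.com/api"  # base of the real-time URL quoted in the article


def build_url(endpoint: str, symbol: str, api_token: str, **params: str) -> str:
    """Build a URL following /api/{endpoint}/{symbol}?api_token=KEY."""
    query = {"api_token": api_token, **params}
    # quote() leaves ordinary ticker characters such as '.' and '-' unchanged.
    return f"{BASE_URL}/{quote(endpoint)}/{quote(symbol)}?{urlencode(query)}"


print(build_url("real-time", "AAPL.US", "KEY"))
print(build_url("real-time", "BTC-USD.CC", "KEY", fmt="json"))
```

Because only the endpoint name and the symbol change between calls, one helper like this can serve every data type that follows the pattern.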

Fundamentals tab: Valuation (P/E, Forward P/E, PEG, Price/Book, EV/EBITDA), Profitability, Dividends — all from EODHD APIs fundamental data, rendered by an AI-built frontend.

What This Means for Non-Developers

If you’re a business person reading this, here’s the honest truth about building software with AI in 2026:

It works. Not perfectly. Not without frustration. But it works well enough to build a real product that real users pay for.

You don’t need to learn Python. You don’t need to understand databases. You need to understand your business domain. What data do your users need? What workflows should the app support? What happens when something goes wrong?

If you can write a clear product spec, you can build software with AI.

The backend I described in this article — with real-time EODHD APIs data streaming, user authentication, payment processing, and an admin panel — would have cost a minimum of $150,000 from a development agency and taken 3–4 months.

It took 7 days and cost me my Claude Code subscription.

See This Backend in Action

Every price, every chart, every piece of data you see in the app flows through the backend described in this article.

The backend worked. Data was flowing. But the UI looked like a spreadsheet from 1997. I had no designer, no budget for one, and zero CSS skills. What happened next surprised even me — AI doesn’t just write code, it designs.

Next: AI Designed My App’s Interface. Designers Wanted to Know Who I Hired.